Spark applications are usually developed in Scala, Python, or R. But do not panic if you are a C# developer: .NET for Apache Spark provides high-level APIs for using Spark from C#.

https://learn.microsoft.com/en-us/dotnet/spark/

Features

https://learn.microsoft.com/en-us/dotnet/spark/what-is-apache-spark-dotnet

- DataFrame and Spark SQL APIs for working with structured data.

- Spark Structured Streaming for working with streaming data.

- Spark SQL for writing queries with SQL syntax.

- Machine learning integration for faster training and prediction.

Prepare your environment

You can find detailed instructions in the following tutorial, or follow the steps below.

Tutorial: Get started with .NET for Apache Spark

Install .NET SDK

- Download and install the latest .NET SDK.

- If you have Visual Studio installed, the .NET SDK is usually already installed.

- Add the folder that contains dotnet.exe to your Path environment variable.

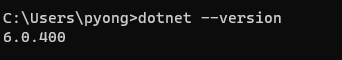

- Verify the .NET SDK installation, for example by running “dotnet --version” in a new command prompt.
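The verification step can also be scripted. The following is a minimal Python sketch, not part of the official tutorial, that looks for dotnet on the Path and asks it for its SDK version. The function names are hypothetical helpers introduced for illustration; the only external command used is “dotnet --version”.

```python
import shutil
import subprocess


def dotnet_sdk_version(executable):
    """Run `dotnet --version` and return the version string it prints."""
    result = subprocess.run(
        [executable, "--version"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def describe_dotnet(path_lookup=shutil.which, version_of=dotnet_sdk_version):
    """Build a one-line status message about the .NET SDK installation.

    path_lookup and version_of are injectable so the logic can be
    exercised without a real .NET installation.
    """
    executable = path_lookup("dotnet")
    if executable is None:
        return "dotnet was not found on Path"
    try:
        version = version_of(executable)
    except (OSError, subprocess.CalledProcessError) as error:
        # dotnet exists but could not report an SDK version.
        return f"dotnet found at {executable}, but the version check failed: {error}"
    return f"dotnet found at {executable}, SDK version {version}"


if __name__ == "__main__":
    print(describe_dotnet())
```

If the script reports that dotnet was not found, recheck the Path entry and open a new command prompt so the change is picked up.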

Install Java, 7Zip, Apache Spark, and winutils.exe

Follow the “Install PySpark on Windows” documentation to install Spark.

[Note] Install JDK 8. JDK 9 and later do not work with this setup. Use the following link to download JDK 8.

https://adoptium.net/temurin/releases/?version=8
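To confirm that the JDK on your Path really is version 8, you can inspect the output of “java -version”. The sketch below is an illustration with hypothetical helper names. It relies on the fact that Java 8 and earlier report versions in the form “1.8.0_x”, while Java 9 and later put the major number first (for example “11.0.21”).

```python
import re
import shutil
import subprocess


def java_major_version(version_output):
    """Extract the major Java version from `java -version` output.

    Returns None when no version string can be found.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first = int(match.group(1))
    second = match.group(2)
    # Java 8 and earlier use the "1.x" scheme, so the major version
    # is the second number; later releases put it first.
    if first == 1 and second is not None:
        return int(second)
    return first


def read_java_version_output():
    """Run `java -version`; the JVM prints this information to stderr."""
    result = subprocess.run(["java", "-version"], capture_output=True, text=True)
    return result.stderr + result.stdout


if __name__ == "__main__":
    if shutil.which("java") is None:
        print("java was not found on Path")
    else:
        major = java_major_version(read_java_version_output())
        print("JDK 8 detected" if major == 8 else f"Detected Java major version: {major}")
```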

[Note] Check which Apache Spark versions .NET for Apache Spark supports. You might need to install a specific version rather than the latest one.

Install .NET for Apache Spark

- Go to the Microsoft.Spark.Worker download page and download the most recent release, for example “Microsoft.Spark.Worker.netcoreapp3.1.win-x64-2.1.1.zip”.

- Unzip the file into a folder of your choice, such as “C:\Apps”.

- Set the DOTNET_WORKER_DIR environment variable to the unzipped worker folder.

DOTNET_WORKER_DIR = C:\Apps\Microsoft.Spark.Worker-2.1.1
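A typo in this variable is easy to miss, so it can help to check it before running an application. The following sketch uses a hypothetical helper, check_worker_dir, and only confirms that the variable is set and points to an existing folder. It does not inspect the folder's contents.

```python
import os
from pathlib import Path


def check_worker_dir(env=os.environ):
    """Return a status message describing the DOTNET_WORKER_DIR setting.

    env is any mapping of environment variable names to values, which
    makes the check easy to try with sample data.
    """
    value = env.get("DOTNET_WORKER_DIR")
    if not value:
        return "DOTNET_WORKER_DIR is not set"
    folder = Path(value)
    if not folder.is_dir():
        return f"DOTNET_WORKER_DIR points to a missing folder: {folder}"
    return f"DOTNET_WORKER_DIR is set to an existing folder: {folder}"


if __name__ == "__main__":
    print(check_worker_dir())
```

Run the script from a newly opened command prompt, since prompts that were already open do not see environment variables set afterwards.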

Change the Default Spark Logging Level

When you run the application from the tutorial, you will see a lot of log messages in the console.

You can change the default logging level as follows.

- First, open File Explorer and go to the “%SPARK_HOME%\conf” folder.

- Copy the “log4j.properties.template” file within the same folder and rename the copy to “log4j.properties”.

- Open the file in a text editor and change the following line:

log4j.rootCategory=INFO, console

to

log4j.rootCategory=WARN, console

You can use the following logging levels:

- ALL, DEBUG, ERROR, FATAL, INFO, OFF, TRACE, WARN
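The copy-and-edit steps above can also be automated. This is a minimal sketch under the assumption that the template contains a “log4j.rootCategory=...” line as shown earlier. The function names set_root_log_level and create_log4j_properties are hypothetical helpers, not part of Spark.

```python
import re
from pathlib import Path

VALID_LEVELS = {"ALL", "DEBUG", "ERROR", "FATAL", "INFO", "OFF", "TRACE", "WARN"}

# Matches an uncommented rootCategory line and captures everything up to the level.
ROOT_CATEGORY = re.compile(r"^(log4j\.rootCategory\s*=\s*)\w+", flags=re.MULTILINE)


def set_root_log_level(properties_text, level):
    """Return the properties text with the root logging level replaced."""
    level = level.upper()
    if level not in VALID_LEVELS:
        raise ValueError(f"unknown log level: {level}")
    new_text, count = ROOT_CATEGORY.subn(lambda m: m.group(1) + level, properties_text)
    if count == 0:
        raise ValueError("no log4j.rootCategory line found")
    return new_text


def create_log4j_properties(conf_dir, level="WARN"):
    """Create log4j.properties from the template in conf_dir with the given level."""
    conf = Path(conf_dir)
    template = conf / "log4j.properties.template"
    target = conf / "log4j.properties"
    target.write_text(set_root_log_level(template.read_text(), level))
    return target
```

For example, calling create_log4j_properties with the path of your “%SPARK_HOME%\conf” folder writes a “log4j.properties” file whose root level is WARN. The appender name after the comma (console) is left unchanged.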